For the past few years, I have been experimenting with peer review in my high school History classes. The California Council for the Social Studies recently published my article about this just as I was conducting a peer review activity with my 10th grade World History students. In the article, I detail using a computer program called PeerGrade, which is great but can add several days to a lesson because students have to type their work, submit it, and then complete several peer reviews.

This post showcases student work from a peer review activity done on paper in a single class period. The essay was an argumentative task in which students had to state a position about eugenics and support it with evidence from 15 Minute History and the Eugenics Archive. Before writing the essay, students shared the evidence they had categorized on a Vee Diagram. The peer review worksheet I created can be accessed in this Google Doc.

In two 50-minute class periods of writing, my 10th grade students produced an average of 361 words with 6 explanations of their evidence.

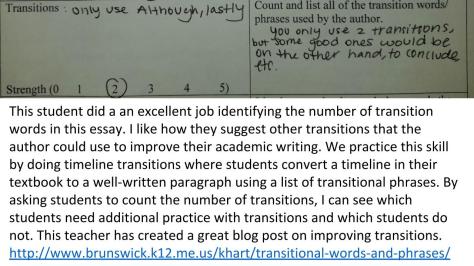

I stole this list from one of my awesome ELA teachers, Mandy Arentoft, and will project it when giving students time to practice using transitions in their historical writing. Another great teacher, Keith Hart from Brunswick High School in Maine, has a helpful blog post on teaching students how to use transitional phrases to present their evidence.

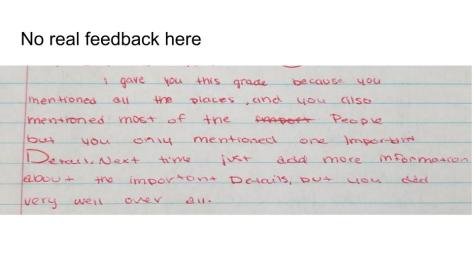

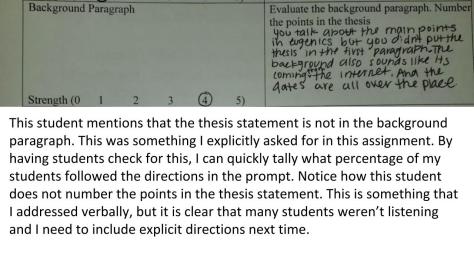

A benefit of using peer review is that I get immediate feedback from my students that tells me who is applying the skills from my mini writing lessons. In this case, I clearly need to go back and re-teach the importance of including a creative title, writing a complex thesis, and using transitions. Fortunately, not everyone will need this instruction, and I can create more advanced writing lessons for those students in my next station rotation activity. For more information about peer review, please look at this #TeachWriting Twitter archive on the topic. It has a wealth of resources for teachers looking to incorporate peer review into their classroom writing instruction.